|

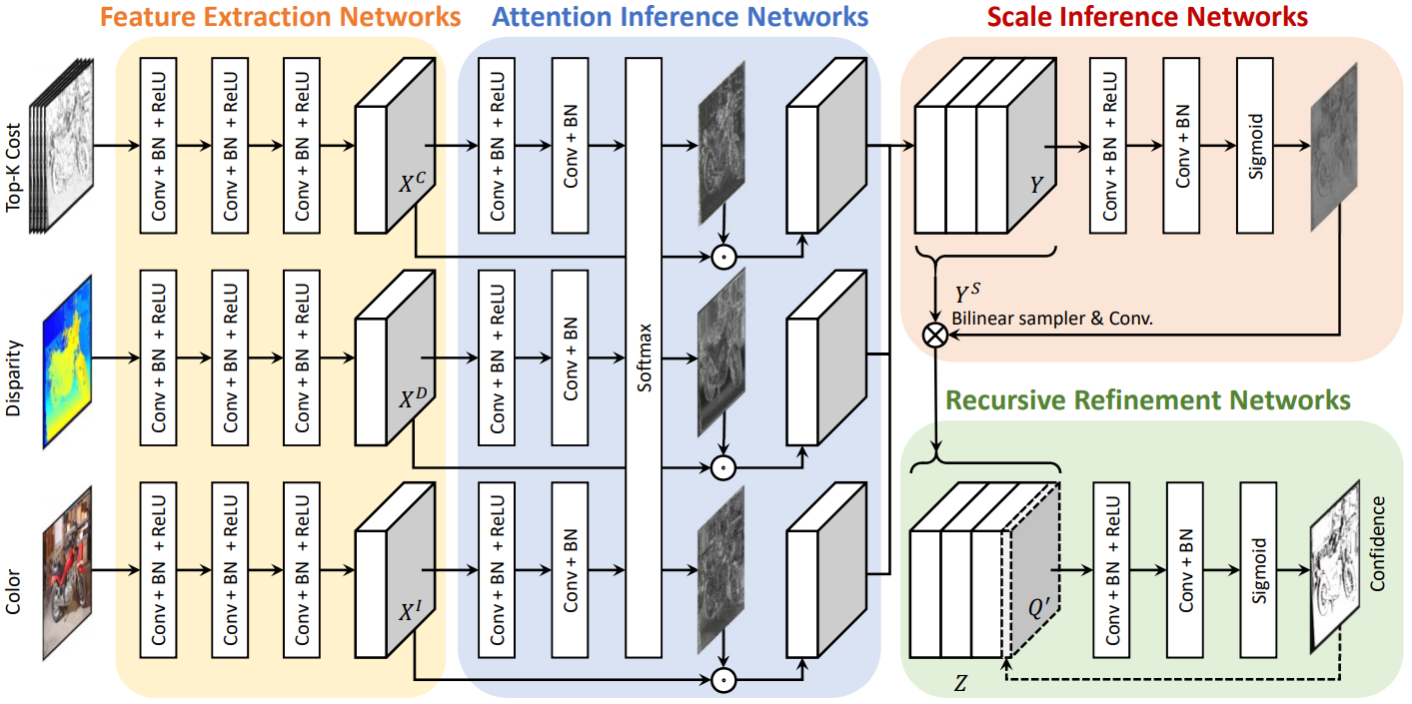

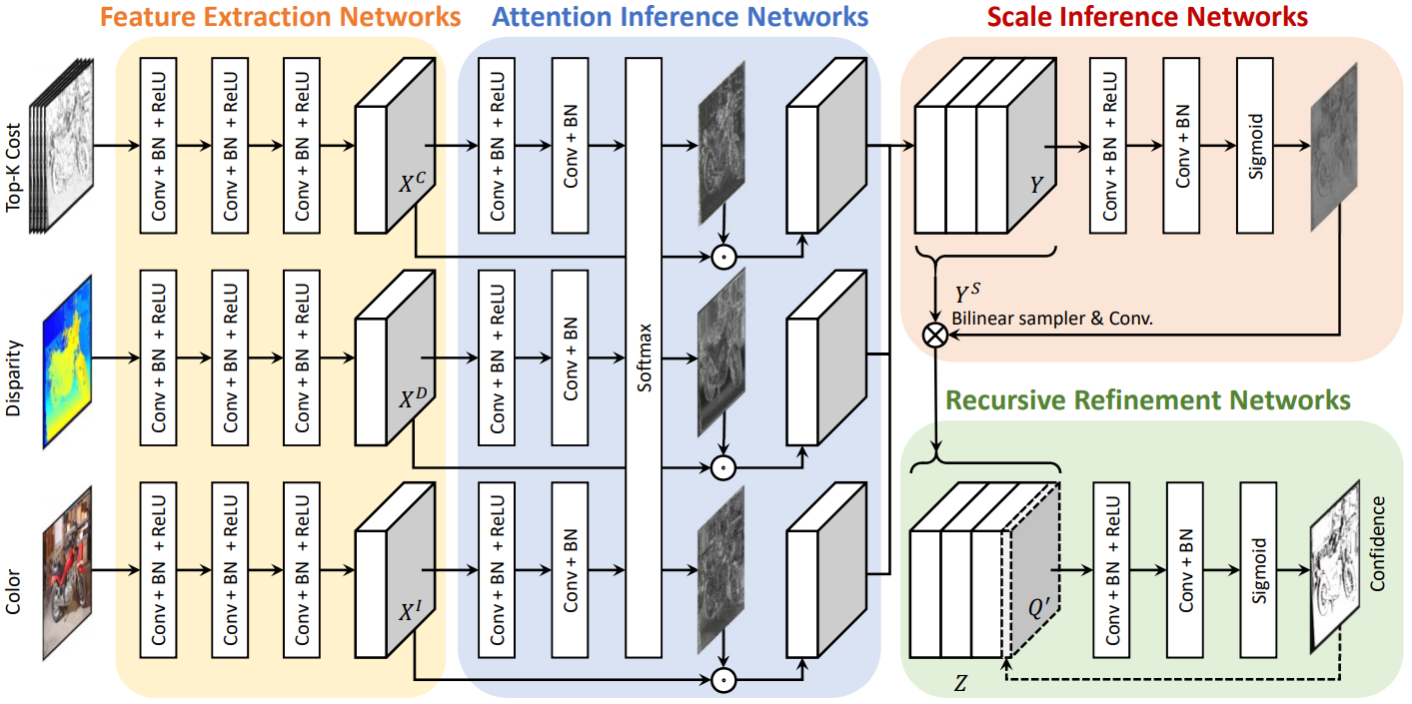

The network configuration of LAF-Net, which consists of four sub-networks: feature extraction networks, attention inference networks, a scale inference network, and recursive refinement networks. Given the matching cost, disparity, and color image as input, our networks output the confidence of the disparity.

|

|

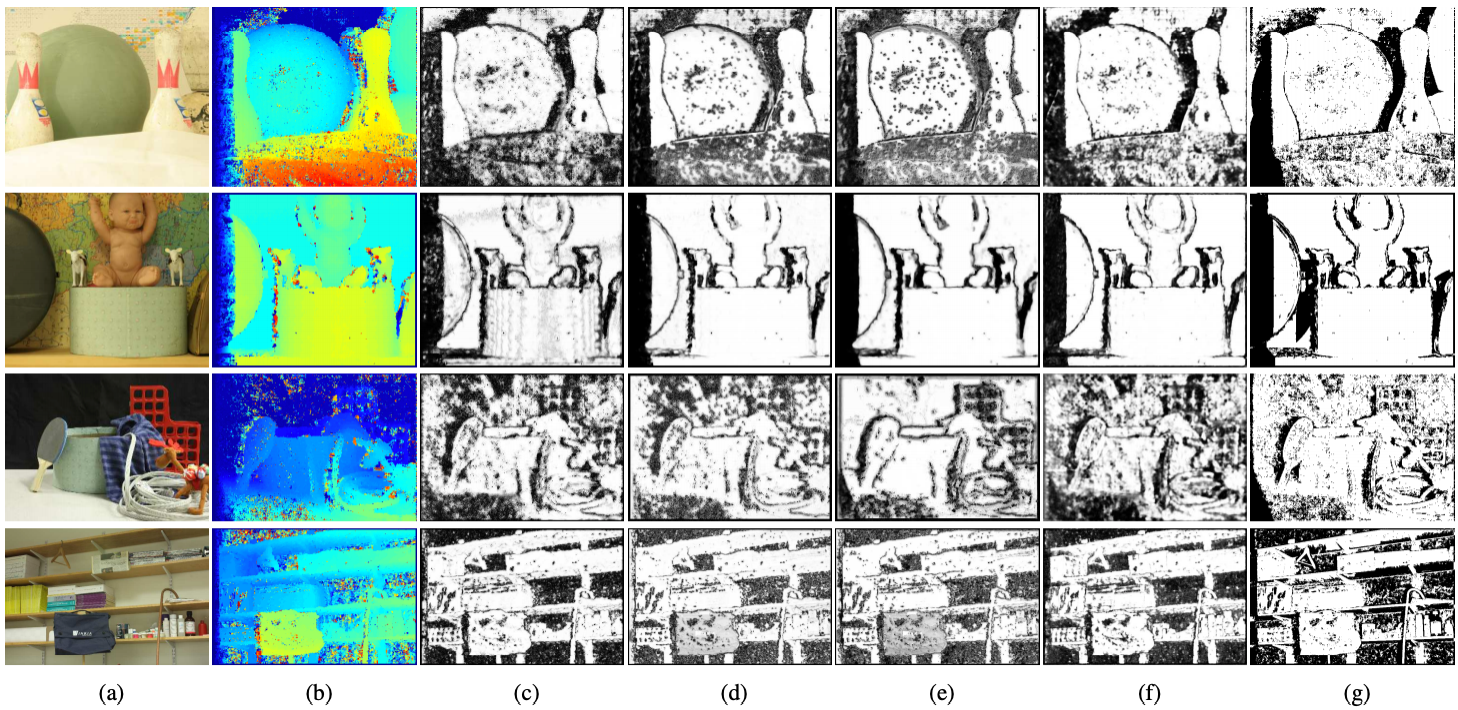

Confidence maps on the MID 2006 dataset [34] (first two rows) and the MID 2014 dataset [33] (last two rows) using census-SGM and MC-CNN. (a) Color images, (b) initial disparity maps, (c)-(f) confidence maps estimated by (c) Kim et al. [21], (d) LFN [7], (e) LGC-Net [40], and (f) LAF-Net, and (g) ground-truth confidence maps.

|